Slicer3:Remote Data Handling

Contents

- 1 Use Cases and Pseudo Code

- 2 Current status of Slicer's (local) data handling

- 3 Goal for how Slicer would upload/download from remote data stores

- 4 Two use cases can drive a first pass implementation

- 5 Current MRML, Logic and GUI Components

- 6 GUI Screenshots

- 7 Target pieces to implement prior to upcoming BIRN Meetings

- 8 pseudocode & notes worked out in 2/14/08 meeting

- 9 ITK-based mechanism handling remote data (for command line modules, batch processing, and grid processing) (Nicole)

- 10 Workflows to support

- 11 What do we want HID webservices to provide?

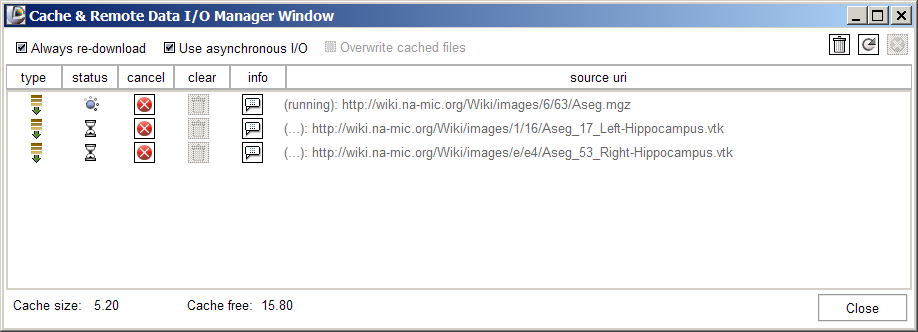

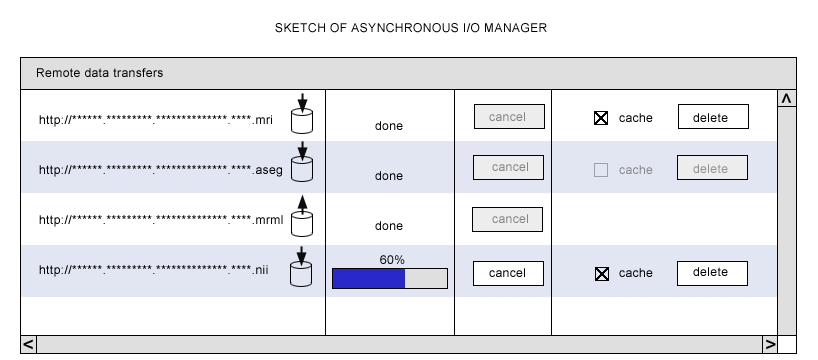

- 12 Slicer's Data I/O Manager

Use Cases and Pseudo Code

At the March 2008 mBIRN meeting, a set of XCEDE use cases and pseudo code for web services was developed and discussed.

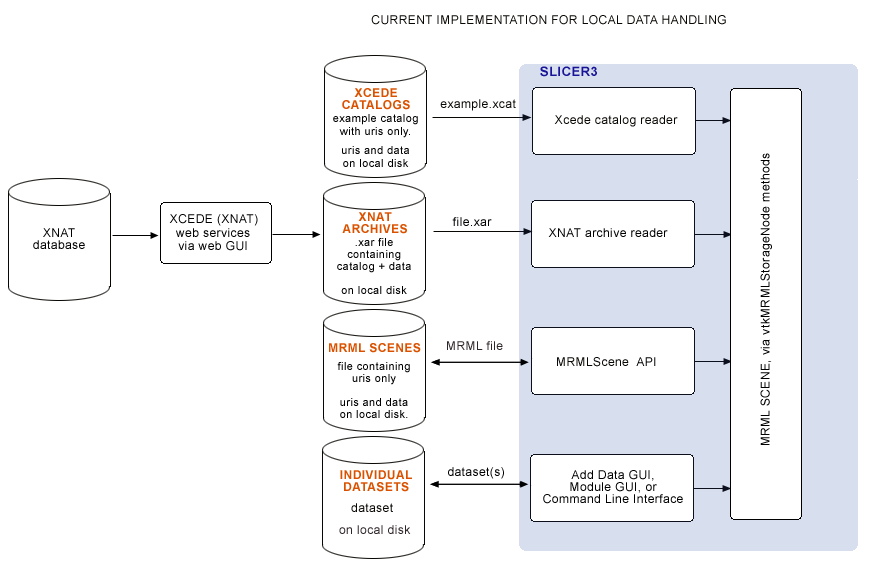

Current status of Slicer's (local) data handling

Currently, MRML files, XCEDE catalog files, XNAT archives and individual datasets are all loaded from local disk. All remote datasets are downloaded (via web interface or command line) outside of Slicer. At the BIRN 2007 AHM we demonstrated downloading .xar files from a remote database, and loading .xar and .xcat files into Slicer from local disk using Slicer's XNAT archive reader and XCEDE 2.0 catalog reader. Slicer's current scheme for data handling is shown below:

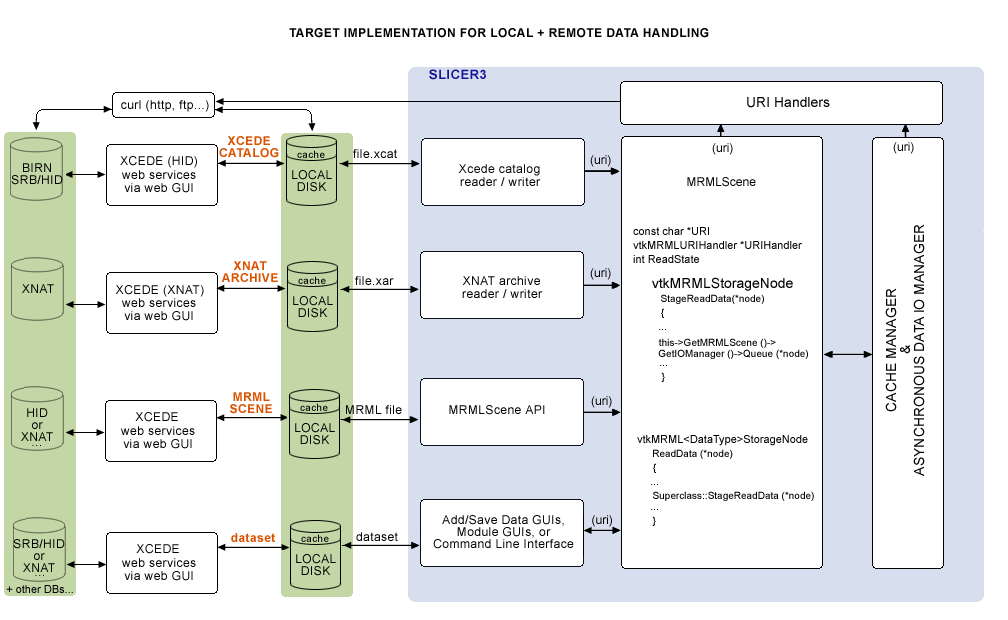

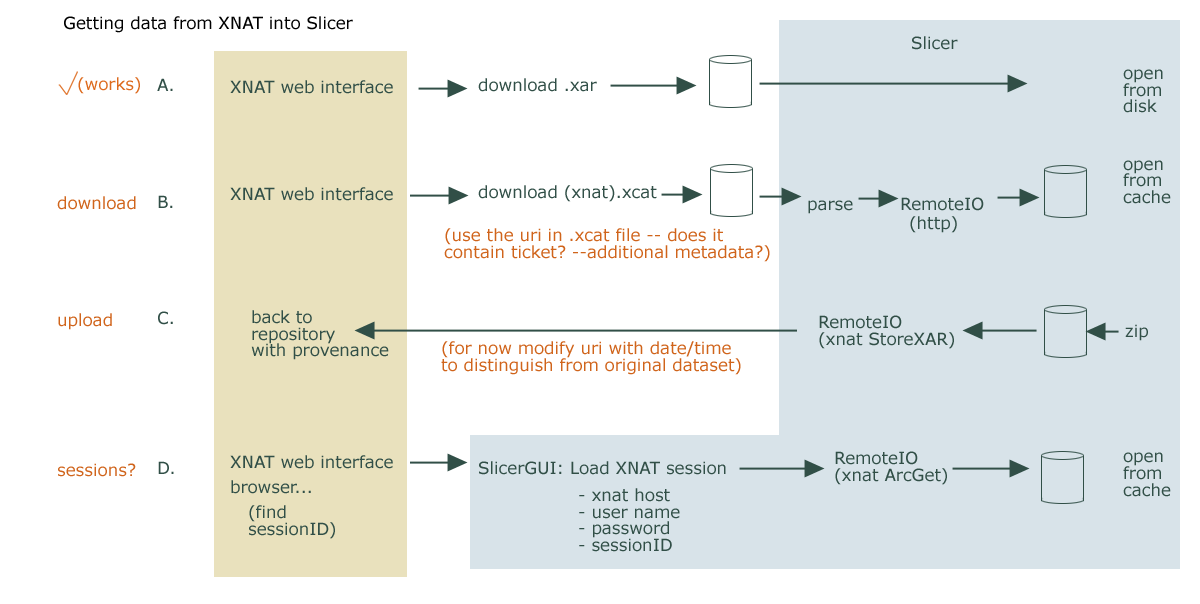

Goal for how Slicer would upload/download from remote data stores

Eventually, we would like to download data from remote or local uris from within the application itself, and to have the option of uploading to remote stores as well. A sketch of the architecture planned in a meeting (on 2/14/08 with Steve Pieper, Nicole Aucoin and Wendy Plesniak) is shown below, including:

- a collection of vtkURIHandlers,

- an (asynchronous) Data I/O Manager and

- a Cache manager,

all created by the main application and pointed to by the MRMLScene:

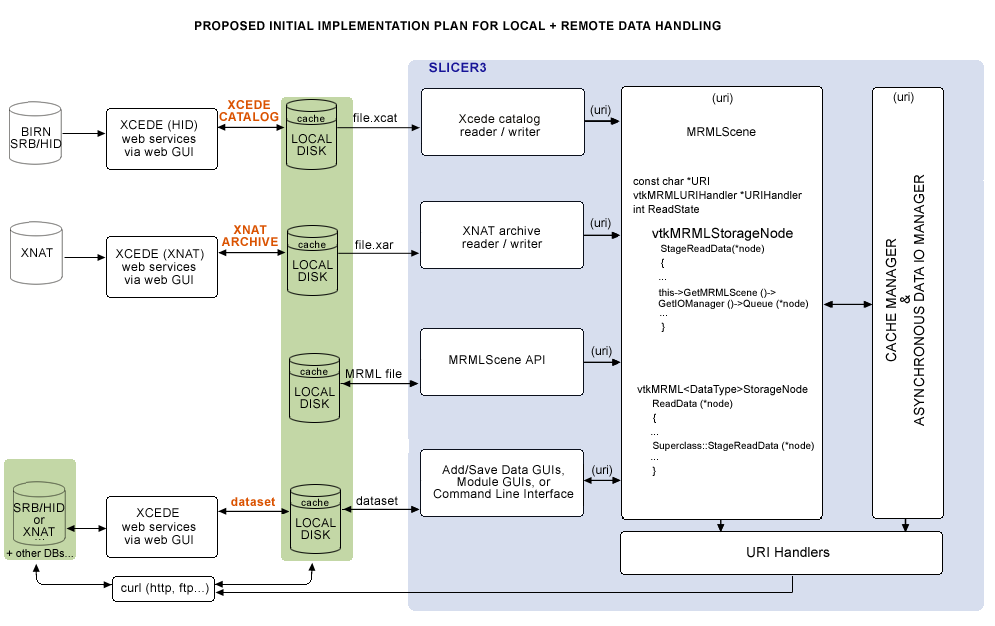
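As a rough illustration of the handler collection, the sketch below shows one way a scene could pick a handler for a uri by asking each registered handler in turn. The class names `URIHandler`, `PrefixHandler` and `Scene`, and the helper `MakeSceneWithDefaultHandlers`, are hypothetical stand-ins written for this example; they are not the vtkURIHandler or vtkMRMLScene classes, and matching purely on the uri prefix is an assumption.

```cpp
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for a vtkURIHandler: each handler claims some uris.
class URIHandler {
public:
  virtual ~URIHandler() = default;
  virtual bool CanHandleURI(const std::string& uri) const = 0;
  virtual std::string Name() const = 0;
};

// Assumption: a handler claims a uri when the uri starts with its prefix.
class PrefixHandler : public URIHandler {
public:
  PrefixHandler(std::string name, std::string prefix)
    : name_(std::move(name)), prefix_(std::move(prefix)) {}
  bool CanHandleURI(const std::string& uri) const override {
    return uri.compare(0, prefix_.size(), prefix_) == 0;
  }
  std::string Name() const override { return name_; }
private:
  std::string name_;
  std::string prefix_;
};

// Hypothetical stand-in for the scene, which only points at the collection.
class Scene {
public:
  void AddURIHandler(std::shared_ptr<URIHandler> handler) {
    handlers_.push_back(std::move(handler));
  }
  // Returns the first handler that claims the uri, or nullptr if none does.
  const URIHandler* FindURIHandler(const std::string& uri) const {
    for (const auto& handler : handlers_) {
      if (handler->CanHandleURI(uri)) {
        return handler.get();
      }
    }
    return nullptr;
  }
private:
  std::vector<std::shared_ptr<URIHandler>> handlers_;
};

// Registers one handler per protocol mentioned on this page.
inline Scene MakeSceneWithDefaultHandlers() {
  Scene scene;
  scene.AddURIHandler(std::make_shared<PrefixHandler>("http", "http://"));
  scene.AddURIHandler(std::make_shared<PrefixHandler>("ftp", "ftp://"));
  scene.AddURIHandler(std::make_shared<PrefixHandler>("srb", "srb://"));
  return scene;
}
```

With this layout the application owns the handlers and the scene only looks them up, so adding a new protocol would mean registering one more handler rather than touching the scene.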

Two use cases can drive a first pass implementation

For BIRN, we'd like to demonstrate two use cases:

- The first is loading a combined FIPS/FreeSurfer analysis, specified in an Xcede catalog (.xcat) file that contains uris pointing to remote datasets, and viewing it with Slicer's QueryAtlas. (If we cannot get an .xcat via the HID web GUI, our approach would be to manually upload a test Xcede catalog file and its constituent datasets to some place on the SRB. We'll keep a copy of the catalog file locally, read it and query SRB for each uri in the .xcat file.)

- The second is running a batch job in Slicer that processes a set of remotely held datasets. Each iteration would take as arguments the XML file parameterizing the EMSegmenter, the uri for the remote dataset, and a uri for storing back the segmentation results.

The schematic of the functionality we'll need is shown below:

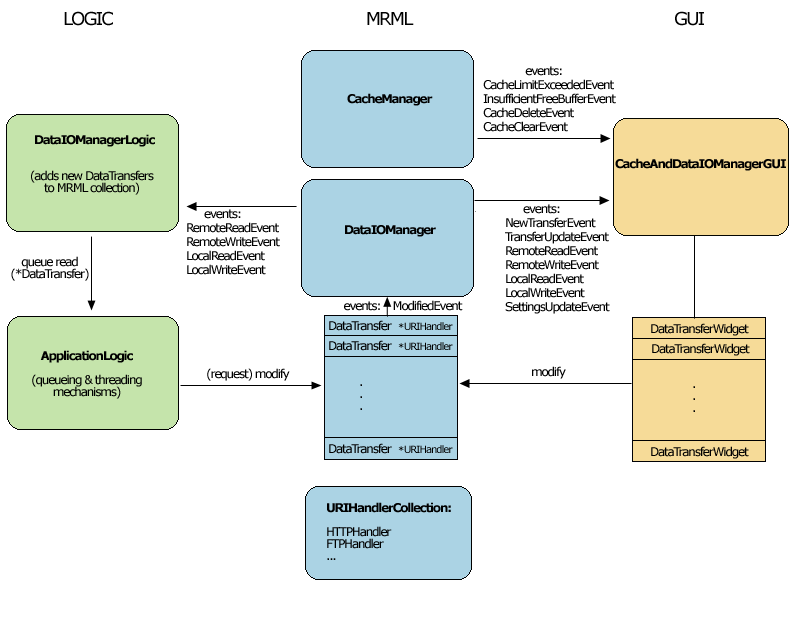

Current MRML, Logic and GUI Components

GUI Screenshots

Target pieces to implement prior to upcoming BIRN Meetings

RemoteIO Library

RemoteIO Library, including the following classes:

- vtkFTPHandler

- vtkHTTPHandler

- vtkSRBHandler

- ...

URIs we want to handle:

Sample URIs:

- http://www.slicer.org/example.mrml

- ftp://user:pw@host:port/path/to/volume.nrrd (read and write)

- http://host/file.vtp (read only)
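To make the shape of these sample URIs concrete, here is a minimal parsing sketch that splits a uri of the form scheme://[user[:pw]@]host[:port]/path into its parts. `ParsedURI` and `ParseURI` are hypothetical names introduced for this example, and the parser deliberately ignores query strings, escaping and other details a real handler would need.

```cpp
#include <string>

// Hypothetical container for the pieces of a uri.
struct ParsedURI {
  std::string scheme;
  std::string user;
  std::string password;
  std::string host;
  std::string port;
  std::string path;
  bool valid = false;
};

// Splits scheme://[user[:pw]@]host[:port]/path. Anything without "://"
// is reported as not valid (for example a plain local filename).
inline ParsedURI ParseURI(const std::string& uri) {
  ParsedURI out;
  const std::string::size_type npos = std::string::npos;
  const std::string::size_type schemeEnd = uri.find("://");
  if (schemeEnd == npos || schemeEnd == 0) {
    return out;
  }
  out.scheme = uri.substr(0, schemeEnd);

  // The authority runs from after "://" up to the first '/' of the path.
  const std::string::size_type authStart = schemeEnd + 3;
  const std::string::size_type pathStart = uri.find('/', authStart);
  std::string authority = (pathStart == npos)
    ? uri.substr(authStart)
    : uri.substr(authStart, pathStart - authStart);
  if (pathStart != npos) {
    out.path = uri.substr(pathStart);
  }

  // Optional user[:pw]@ prefix.
  const std::string::size_type at = authority.rfind('@');
  if (at != npos) {
    const std::string userinfo = authority.substr(0, at);
    authority = authority.substr(at + 1);
    const std::string::size_type sep = userinfo.find(':');
    out.user = userinfo.substr(0, sep);
    if (sep != npos) {
      out.password = userinfo.substr(sep + 1);
    }
  }

  // Optional :port suffix.
  const std::string::size_type colon = authority.find(':');
  out.host = authority.substr(0, colon);
  if (colon != npos) {
    out.port = authority.substr(colon + 1);
  }
  out.valid = true;
  return out;
}
```

For the ftp sample above this yields the scheme `ftp`, the user and password from the `user:pw` part, and the path `/path/to/volume.nrrd`, which is the split a handler would need before opening a connection.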

Test Cases:

- RemoteTest.mrml - points to CTHeadAxial.nrrd (the xml file is there, it may not render in your browser)

- RemoteTestVtk.mrml - points to Aseg_17_Left-Hippocampus.vtk, Aseg_53_Right-Hippocampus.vtk

- RemoteTestMgzVtk.mrml points to the above vtk files as well as Aseg.mgz

- RemoteTestColours.mrml points to Aseg.mgz and the colour file FSColorLabelsTest.ctbl

- RemoteTestMgzVtkPial.mrml points to Aseg.mgz, Aseg_53_Right-Hippocampus.vtk, and lh.pial

- RemoteTestSRBVtk.mrml points to a file on the BIRN SRB /home/naucoin.harvard-bwh/aseg_17_left-hippocampus.vtk (for now, requires that the uri string start with srb://) You must install and set up the SRB clients following the instructions here.

- fBIRN-AHM2007.xcat a sample xcat file pointing to files on http://slicerl.bwh.harvard.edu/data/fBIRN (must download manually)

- RemoteBertSurfAndOverlays.mrml points to files on http://slicerl.bwh.harvard.edu/data, tests new overlay storage nodes

- fBIRN-AHM2008-SRB.xcat points to files on the BIRN SRB /home/Public/FIPS-FreeSurfer-XCAT (for now, requires that the uri string start with srb://) You must install and set up the SRB clients following the instructions here.

XNAT questions

Questions:

Essentially the process I want to be able to do is:

- query xnat using tags and get back xcat

- have xcat include subject id which can be used to request more subject details

- set/tag a tag on a subject / project / session / scan / reconstruction...

- construct a valid xar for StoreXAR that has the right IDs to put it in the Additional Resources section of a Session for future download.

From the Slicer application, I'd like to make sure it's as simple as possible to load data from an xnat db, and to save it back, somehow annotated with whatever was done to it during the Slicer session.

There may be multiple ways we want to download/upload data, but we'll focus on a common workflow first.

Download:

I think we're assuming that users will commonly download a .xcat file from an xnat host's web interface to local disk. That file will be parsed by Slicer and all remote references will be downloaded and opened by the app.

- My understanding is that the .xcat file will contain a uri value that http curl can get, along with satisfactory 'ticket' information; if that's so, we're good for remote loading.

- If the uri value has invalid ticket information, I think we may want to prompt for username, password and xnat host, apply for a new (or reactivate an old) ticket string, and re-create the uri. It would be good to have a prescription for how to do this.

- (We may want richer metadata in the .xcat file for the sake of data provenance, and Nicole may have more specific suggestions on this topic.)

Upload:

I'm guessing we'll commonly use Slicer's save data widget to save the scene or selected datasets. If a dataset came from an xnat db, as a first pass, we'll want to store the data back by default. We'll need to:

- mark up data using XCEDE schema (do we know how?),

- write new tags to track provenance (our own tags?),

- change names of files to avoid overwriting originals (is there a favored strategy?)

MRML extensions

MRML-specific implementations and extensions of the following classes:

- vtkDataIOManager

- vtkMRMLStorageNode methods

- vtkMRML<DataType>StorageNode methods

- vtkPasswordPrompter

- vtkDataTransfer

- vtkCacheManager

- vtkURIHandler

GUI extensions

GUI implementations of the following classes:

- vtkSlicerDataTransferWidget

- vtkSlicerCacheAndDataIOManagerGUI

- vtkSlicerPasswordPrompter

Logic extensions

- vtkDataIOManagerLogic

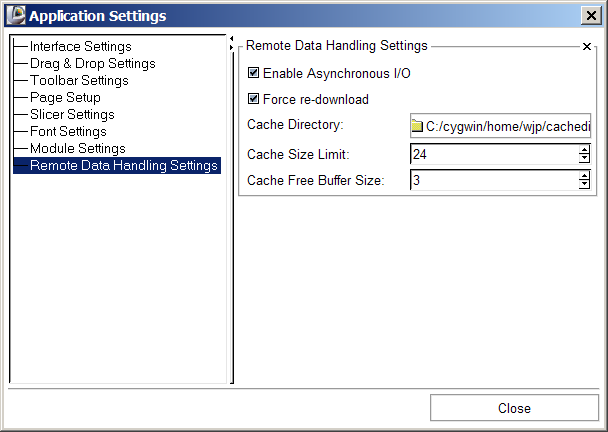

Application Interface extensions

- Set CacheDirectory default = Slicer3 temp dir

- Set CacheFreeBufferSize default = 10Mb

- Set CacheLimit default = 20Mb

- Enable/Disable CacheOverwriting default = true

- Enable/Disable ForceRedownload default = false

- Enable/Disable asynchronous I/O default = false

- Instance & Register URI Handlers (?)
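The defaults listed above could be gathered into a single settings record, as in the sketch below. `RemoteIOSettings` and `FitsInCache` are hypothetical names. Two points are assumptions rather than anything stated on this page: that the sizes are megabytes, and that CacheFreeBufferSize means space that must stay free below CacheLimit.

```cpp
#include <cstdint>
#include <string>

// Hypothetical settings record mirroring the defaults listed above.
// Assumption: sizes are in megabytes.
struct RemoteIOSettings {
  std::string CacheDirectory;              // application sets this to the Slicer3 temp dir
  std::uint64_t CacheFreeBufferSizeMB = 10;
  std::uint64_t CacheLimitMB = 20;
  bool EnableCacheOverwriting = true;
  bool ForceRedownload = false;
  bool EnableAsynchronousIO = false;
};

// Assumed interpretation: a download fits when the cache stays at or below
// (limit - free buffer) after the incoming file is added.
inline bool FitsInCache(const RemoteIOSettings& settings,
                        std::uint64_t usedMB,
                        std::uint64_t incomingMB) {
  if (settings.CacheFreeBufferSizeMB > settings.CacheLimitMB) {
    return false;  // inconsistent configuration
  }
  const std::uint64_t usable =
    settings.CacheLimitMB - settings.CacheFreeBufferSizeMB;
  return usedMB <= usable && incomingMB <= usable - usedMB;
}
```

Under that interpretation the default configuration leaves 10 MB of usable cache, which suggests the defaults would need to be raised for typical volume data.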

Important Notes

- MRML Scene file reading will always be synchronous.

- Read/write of individual datasets referenced in the scene should work with or without the asynchronous read/write turned on, and with or without the dataIOManager GUI interface.

pseudocode & notes worked out in 2/14/08 meeting

MRMLScene

// example: pseudocode for reading remote mrml scene,

// parsing and loading data synchronously or asynchronously.

//------------------------------------------------------------

//-- hypothetical dataset: http://*/example.mrml

//------------------------------------------------------------

//--- In Slicer3.cxx main application

//--- Create Application and read registry

//--- Create MRML scene

vtkMRMLScene *scene = vtkMRMLScene::New();

//--- create cache manager

CacheManager = vtkCacheManager::New();

CacheManager->Configure();

//--- create asynchronous IO manager

DataIOManager = vtkMRMLDataIOManager::New();

DataIOManager->Configure();

//--- create uri handler collection and populate

URIHandlerCollection = vtkCollection::New();

//--- discover and instance all vtkURIHandlers and add them to collection

//--- set members of mrml scene

scene->SetCacheManager (CacheManager);

scene->SetDataIOManager (DataIOManager);

scene->SetURIHandlerCollection ( URIHandlerCollection );

scene->SetURI ( *uri );

//--- parse scene file and load data

MRMLScene::Connect()

{

filename = GetCacheManager()->GetFilenameFromURI ( *uri );

handler = this->FindURIHandler ( *uri );

handler->StageFile ( *uri, *filename );

//…

//--- synchronous read until we have the file on disk

//…

//--- then parse

parser = vtkMRMLParser::New();

parser->SetMRMLScene ( this);

parser->Parse();

//--- and create nodes

//…

{

vtkMRML<DataType>StorageNode->ReadData();

}

}

Note:

- scene->GetRootDir() should return a uri

- When scene->Delete() is called:

if (CacheManager != NULL )

{

CacheManager->Delete();

CacheManager = NULL;

}

if (DataIOManager != NULL )

{

DataIOManager->Delete();

DataIOManager = NULL;

}

//--- and clean up the URIHandlerCollection here too?

vtkMRMLStorageNode class

State flag names: READY and PENDING

vtkMRMLStorageNode::StageReadData ( vtkMRMLNode *node )

{

//--- checking to see if URI, Scene or node are null

...

//--- Then stage the read

vtkMRMLDataIOManager *iomanager = this->GetMRMLScene()->GetDataIOManager();

int asynch = 0;

if ( iomanager != NULL )

{

asynch = iomanager->GetEnableAsynchronousIO();

}

if ( iomanager != NULL && asynch && this->GetReadState() != this->PENDING )

{

this->SetReadStatePending();

//--- set up the data handler

this->URIHandler = this->Scene->FindURIHandler ( this->URI );

iomanager->QueueRead (node);

}

else

{

//--- do the synchronous read here if we have a URIHandler?

//--- get cacheFileName

...

if (this->URIHandler)

{

this->URIHandler->StageFileRead ( this->URI, cacheFileName );

this->SetReadState(this->DONE);

}

else

{

//--- error message

return;

}

}

}

Another thing we can do here is to let the DataIOManager do all the URIHandler stuff, either asynchronously or synchronously, since it has the flag that sets either IO mode. So an alternative to the if() statement above would be:

vtkMRMLStorageNode::StageReadData (vtkMRMLNode *node )

{

...

...

...

if ( iomanager != NULL && this->GetReadState() != this->PENDING )

{

this->SetReadStatePending();

//--- set up the data handler

this->URIHandler = this->Scene->FindURIHandler ( this->URI );

//--- DataIOManager will either schedule an asynchronous read,

//--- or will execute the URIHandler->StageFileRead() method

//--- synchronously (depending on its EnableAsynchronousIO flag)

//--- and return.

iomanager->QueueRead (node);

if ( !iomanager->GetEnableAsynchronousIO() )

{

this->SetReadState(this->DONE);

}

}

}
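The second variant above, where the I/O manager decides between blocking and deferred execution, can be sketched with plain C++ stand-ins. `IOManager`, `StorageNode` and `ProcessPending` are hypothetical names for this example. A simple queue plays the role of the background worker, and the node is marked done by the job itself rather than by the caller, which is a small departure from the pseudocode.

```cpp
#include <functional>
#include <queue>
#include <string>
#include <utility>

enum class ReadState { Ready, Pending, Done };

// Hypothetical stand-in for the Data I/O Manager.
class IOManager {
public:
  explicit IOManager(bool asynchronous) : asynchronous_(asynchronous) {}
  bool GetEnableAsynchronousIO() const { return asynchronous_; }

  // Runs the job immediately in synchronous mode, otherwise defers it.
  void QueueRead(std::function<void()> job) {
    if (asynchronous_) {
      pending_.push(std::move(job));
    } else {
      job();
    }
  }

  // Stand-in for the background worker: runs every deferred job.
  void ProcessPending() {
    while (!pending_.empty()) {
      std::function<void()> job = std::move(pending_.front());
      pending_.pop();
      job();
    }
  }

private:
  bool asynchronous_;
  std::queue<std::function<void()>> pending_;
};

// Hypothetical stand-in for a storage node. The node must outlive any job
// it has queued, because the job refers back to it.
class StorageNode {
public:
  explicit StorageNode(std::string uri) : uri_(std::move(uri)) {}
  const std::string& GetURI() const { return uri_; }
  ReadState GetReadState() const { return state_; }
  int GetStagedCount() const { return staged_; }

  void StageReadData(IOManager* iomanager) {
    // Do nothing without a manager, or while a read is already in flight.
    if (iomanager == nullptr || state_ == ReadState::Pending) {
      return;
    }
    state_ = ReadState::Pending;
    iomanager->QueueRead([this]() {
      ++staged_;                 // the uri handler would stage the file here
      state_ = ReadState::Done;
    });
  }

private:
  std::string uri_;
  ReadState state_ = ReadState::Ready;
  int staged_ = 0;
};
```

In synchronous mode the node is already done when `StageReadData` returns; in asynchronous mode it stays pending until the deferred job runs, and a second call while pending does not queue a duplicate read.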

vtkMRMLStorageNode::StageWriteData ( vtkMRMLNode *node )

{

// similar to above

}

Notes:

- Need progress feedback -- vtkFilterWatcher on handler?

vtkMRML<DataType>StorageNode classes

vtkMRML<DataType>StorageNode::ReadData ( vtkMRMLNode *node )

{

Superclass::StageReadData ( node );

if ( this->GetReadState() == PENDING )

{

return 0;

}

else

{

//--- file is staged on local disk: read it into the node here

}

}

vtkMRML<DataType>StorageNode::WriteData ( vtkMRMLNode *node)

{

// figure out logic wrt checking GetWriteState

if (this->GetWriteState() != PENDING)

{

Write to file;

}

Superclass::StageWriteData(node);

}

vtkCacheManager class

vtkCachedFileCollection = vtkCollection::New();
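The pseudocode elsewhere on this page calls GetFilenameFromURI on the cache manager without saying how the name is derived. One plausible mapping, sketched below as a free function, keeps only the last path component of the uri and places it in the cache directory. This is an assumption for illustration, not the vtkCacheManager implementation, and it would let two uris with the same file name collide in the cache.

```cpp
#include <string>

// Sketch of a uri-to-cache-filename mapping (an assumption, not the actual
// vtkCacheManager behaviour). Returns an empty string if the uri has no
// file name component.
inline std::string GetFilenameFromURI(const std::string& cacheDir,
                                      const std::string& uri) {
  // Drop any query string or fragment.
  const std::string trimmed = uri.substr(0, uri.find_first_of("?#"));

  // Keep only what follows the last '/'.
  const std::string::size_type slash = trimmed.find_last_of('/');
  const std::string name = (slash == std::string::npos)
    ? trimmed
    : trimmed.substr(slash + 1);
  if (name.empty()) {
    return std::string();
  }

  // Join with the cache directory, avoiding a doubled separator.
  if (cacheDir.empty() || cacheDir.back() == '/') {
    return cacheDir + name;
  }
  return cacheDir + "/" + name;
}
```

A mapping that also encoded the host or the remote directory would avoid collisions at the cost of longer cache paths.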

vtkDataIOManager (MRML)

In vtkDataIOManager class:

This class is basically a container for all data transfers and provides

- some convenience methods for accessing data transfers and their info

- and Get/Set access to manage the Synchronous versus Asynchronous data IO state.

- Invokes a RemoteReadEvent, or RemoteWriteEvent with the node as calldata, which the vtkDataIOManagerLogic is listening for and will process.

vtkDataIOManager::QueueRead ( vtkMRMLNode *node )

{

//--- creates a vtkDataTransferObject, sets values, adds to collection

this->AddDataTransfer( node );

//--- if ForceRedownload flag is set:

this->DeleteFileInCache ( );

handler = node->GetStorageNode()->GetURIHandler();

//--- Invokes a vtkDataIOManager::RemoteReadEvent and sends the node as calldata

this->InvokeEvent ( vtkDataIOManager::RemoteReadEvent, node );

}
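The hand-off between the manager and the logic class is an observer pattern: the manager announces a read or write request, and the logic reacts. The sketch below mimics that with standard C++ callbacks instead of VTK's event mechanism. All names here are hypothetical, and the node is passed as an ID string rather than as calldata.

```cpp
#include <functional>
#include <map>
#include <string>
#include <utility>
#include <vector>

enum class TransferEvent { RemoteRead, RemoteWrite };

// Hypothetical stand-in for the manager: it only announces requests.
class DataIOManager {
public:
  using Callback = std::function<void(const std::string& nodeID)>;

  void AddObserver(TransferEvent event, Callback callback) {
    observers_[event].push_back(std::move(callback));
  }
  void QueueRead(const std::string& nodeID) {
    InvokeEvent(TransferEvent::RemoteRead, nodeID);
  }
  void QueueWrite(const std::string& nodeID) {
    InvokeEvent(TransferEvent::RemoteWrite, nodeID);
  }

private:
  void InvokeEvent(TransferEvent event, const std::string& nodeID) const {
    const auto it = observers_.find(event);
    if (it == observers_.end()) {
      return;
    }
    for (const auto& callback : it->second) {
      callback(nodeID);
    }
  }
  std::map<TransferEvent, std::vector<Callback>> observers_;
};

// Hypothetical stand-in for the logic class: it listens and records the
// requests it would otherwise schedule or execute.
class DataIOManagerLogic {
public:
  explicit DataIOManagerLogic(DataIOManager& manager) {
    manager.AddObserver(TransferEvent::RemoteRead,
      [this](const std::string& id) { reads_.push_back(id); });
    manager.AddObserver(TransferEvent::RemoteWrite,
      [this](const std::string& id) { writes_.push_back(id); });
  }
  // The callbacks capture this, so the object must stay where it is.
  DataIOManagerLogic(const DataIOManagerLogic&) = delete;
  DataIOManagerLogic& operator=(const DataIOManagerLogic&) = delete;

  const std::vector<std::string>& Reads() const { return reads_; }
  const std::vector<std::string>& Writes() const { return writes_; }

private:
  std::vector<std::string> reads_;
  std::vector<std::string> writes_;
};
```

Keeping the manager free of any transfer code means it can live in the MRML layer without depending on the application logic, which appears to be the motivation for the split described here.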

vtkDataIOManagerLogic (Base/Logic)

In vtkDataIOManagerLogic class:

This class either does the work of queueing data transfers for asynchronous download/upload, or performs download/upload in a blocking fashion depending on the state of the DataIOManager's EnableAsynchronousIO flag. It uses the ApplicationLogic's scheduling mechanisms, and spawns a new thread for each requested asynchronous data transfer.

vtkDataIOManagerLogic::ProcessMRMLEvents ( vtkObject* caller, unsigned long event, void *calldata)

{

if ( this->DataIOManager == vtkDataIOManager::SafeDownCast ( caller ) )

{

//--- unpack the calldata into a vtkMRMLNode *node...;

if ( event == vtkDataIOManager::RemoteReadEvent )

{

this->QueueRead ( node );

}

else if ( event == vtkDataIOManager::RemoteWriteEvent )

{

this->QueueWrite ( node );

}

}

}

vtkDataIOManagerLogic::QueueRead ( vtkMRMLNode *node)

{

//--- do some null checking on node,

...

//--- safedowncast the node into a vtkMRMLDisplayableNode *dnode

...

vtkURIHandler *handler = dnode->GetStorageNode()->GetURIHandler();

//--- null check

...

const char *source = dnode->GetStorageNode()->GetURI();

const char *dest = this->GetDataIOManager()->GetCacheManager()->GetFilenameFromURI(source);

//---

//--- create a new data transfer and stuff it full of all the information associated

vtkDataTransfer *transfer = this->GetDataIOManager()->AddNewDataTransfer ( node );

//--- maybe move the above method into the logic class?

if ( transfer == NULL )

{

return 0;

}

transfer->SetSourceURI(source);

transfer->SetDestinationURI ( dest );

transfer->SetHandler(handler);

transfer->SetTransferType ( vtkDataTransfer::RemoteDownload);

transfer->SetTransferStatus ( vtkDataTransfer::Unspecified );

//---

//--- if the transfer is ASYNCHRONOUS, Schedule it into

//--- the ApplicationLogic's task processing pipeline...

//---

if ( this->GetDataIOManager()->GetEnableAsynchronousIO() )

{

vtkSlicerTask *task = vtkSlicerTask::New();

task->SetTaskFunction( this, (vtkSlicerTask::TaskFunctionPointer)

&vtkDataIOManagerLogic::ApplyTransfer, transfer);

bool ret = this->GetApplicationLogic()->ScheduleTask( task );

if ( ret )

{

transfer->SetTransferStatus ( vtkDataTransfer::Scheduled );

}

task->Delete();

if (!ret )

{

return 0;

}

}

//---

//--- if the transfer is SYNCHRONOUS... just execute it

//---

else

{

this->ApplyTransfer ( transfer );

}

return 1;

}

...

}

The ApplyTransfer() method, which is either scheduled or called synchronously, contains all the URI handling:

vtkDataIOManagerLogic::ApplyTransfer ( void *clientdata )

{

//--- cast the clientdata into a vtkDataTransfer

vtkDataTransfer *dt = reinterpret_cast <vtkDataTransfer*> (clientdata);

if ( dt == NULL ) return;

//---

//--- get the node

//---

vtkMRMLNode *node = this->GetMRMLScene()->GetNodeByID((dt->GetTransferNodeID()));

if ( node == NULL ) return;

//---

//--- download data

//---

const char *source = dt->GetSourceURI();

const char *dest = dt->GetDestinationURI();

if ( dt->GetTransferType() == vtkDataTransfer::RemoteDownload )

{

vtkURIHandler *handler = dt->GetHandler();

if ( this->GetDataIOManager()->GetEnableAsynchronousIO())

{

//---

//--- THIS IS BEING EXECUTED IN A SEPARATE THREAD.

//---

handler->StageFileRead(source, dest);

this->GetApplicationLogic()->RequestReadData(node->GetID(), dest, 0, 0);

}

else

{

//---

//--- THIS IS BEING EXECUTED IN THE MAIN THREAD.

//---

handler->StageFileRead (source, dest);

}

}

...

}

...

}
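In the asynchronous branch above, the download runs in a separate thread and then calls RequestReadData, presumably so that the actual load into the node happens later on the main thread. A minimal sketch of such a hand-off is a mutex-protected queue: workers push requests and the main thread pops them. `ReadRequestQueue` and `ReadDataRequest` are hypothetical names, and this is not the ApplicationLogic implementation.

```cpp
#include <mutex>
#include <queue>
#include <string>
#include <utility>

// Hypothetical record of one "please load this file into this node" request.
struct ReadDataRequest {
  std::string nodeID;
  std::string filename;
};

class ReadRequestQueue {
public:
  // Safe to call from a download thread once the file is on local disk.
  void RequestReadData(std::string nodeID, std::string filename) {
    std::lock_guard<std::mutex> lock(mutex_);
    requests_.push(ReadDataRequest{std::move(nodeID), std::move(filename)});
  }

  // Intended for the main thread (for example from a timer callback).
  // Pops the oldest request into out; returns false when the queue is empty.
  bool ProcessNext(ReadDataRequest& out) {
    std::lock_guard<std::mutex> lock(mutex_);
    if (requests_.empty()) {
      return false;
    }
    out = std::move(requests_.front());
    requests_.pop();
    return true;
  }

private:
  std::mutex mutex_;
  std::queue<ReadDataRequest> requests_;
};
```

The download thread never touches the scene in this arrangement; it only records where the file landed, and the main thread decides when to read it.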

ITK-based mechanism handling remote data (for command line modules, batch processing, and grid processing) (Nicole)

This one is tentatively on hold for now.

Workflows to support

The first goal is to figure out what workflows to support, and a good implementation approach.

Currently, Load Scene, Import Scene, and Add Data options in Slicer all encapsulate two steps:

- locating a dataset, usually accomplished through a file browser, and

- selecting a dataset to initiate loading.

Then MRML files, Xcede catalog files, or individual datasets are loaded from local disk.

For loading remote datasets, the following options are available:

- break these two steps apart explicitly (easiest option),

- bind them together under the hood,

- or support both of these paradigms.

For now, we choose the first option.

workflows:

Possible workflow A

- User downloads .xcat or .xml (MRML) file to disk using the HID or an XNAT web interface

- From the Load Scene file browser, user selects the .xcat or .xml archive. If no locally cached versions exist, each remote file listed in the archive is downloaded to the /tmp directory (always locally cached) by the Data I/O Manager, and the cached (local) uri is then passed to a vtkMRMLStorageNode method when the download is complete.

Possible workflow B

- User downloads .xcat or .xml (MRML) file to disk using the HID or an XNAT web interface

- From the Load Scene file browser, user selects the .xcat or .xml archive. If no locally cached versions exist, each remote file in the archive is downloaded to /tmp (only if a flag is set) by the Data I/O Manager, and loaded directly into Slicer via a vtkMRMLStorageNode method when the download is complete. (How does load work if we don't save to disk first?)

Workflow C

- describe batch processing example here, which includes saving to local or remote.

In each workflow, the data gets saved to disk first and then loaded into StorageNode or uploaded to remote location from cache.
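The download-then-load rule of workflow A can be sketched as a small resolver that turns any uri into a local path, downloading only when there is no cached copy or when a re-download is forced. `CachedLoader` is a hypothetical name. The download step is injected as a callback so no particular transfer library is implied, and the cache is tracked in memory here rather than by checking the disk.

```cpp
#include <functional>
#include <set>
#include <string>
#include <utility>

class CachedLoader {
public:
  // Callback that fetches uri into dest and reports success.
  using Downloader =
    std::function<bool(const std::string& uri, const std::string& dest)>;

  CachedLoader(std::string cacheDir, Downloader download)
    : cacheDir_(std::move(cacheDir)), download_(std::move(download)) {}

  // Returns a local path for the uri, or an empty string if a needed
  // download fails. Uris without "://" are treated as local paths.
  std::string Resolve(const std::string& uri, bool forceRedownload = false) {
    if (uri.find("://") == std::string::npos) {
      return uri;
    }
    // Cache name: last path component placed in the cache directory.
    const std::string dest =
      cacheDir_ + "/" + uri.substr(uri.find_last_of('/') + 1);
    if (forceRedownload || cached_.count(dest) == 0) {
      if (!download_(uri, dest)) {
        return std::string();
      }
      cached_.insert(dest);
    }
    return dest;
  }

private:
  std::string cacheDir_;
  Downloader download_;
  std::set<std::string> cached_;  // in-memory stand-in for files on disk
};
```

A storage node would then always be handed the returned local path, which matches the statement that data is saved to disk first in every workflow.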

What data do we need in an .xcat file?

For the fBIRN QueryAtlas use case, we need a combination of FreeSurfer morphology analysis and a FIPS analysis of the same subject. With the combined data in Slicer, we can view activation overlays co-registered to and overlaid onto the high resolution structural MRI using the FIPS analysis, and determine the names of brain regions where activations occur using the co-registered morphology analysis.

The required analyses including all derived data are in two standard directory structures on local disk, and *hopefully* somewhere on the HID within a standard structure (check with Burak). These directory trees contain a LOT of files we don't need... Below are the files we *do* need for fBIRN QueryAtlas use case.

FIPS analysis (.feat) directory and required data

For instance, the FIPS output directory in our example dataset from Doug Greve at MGH is called sirp-hp65-stc-to7-gam.feat. Under this directory, QueryAtlas needs the following datasets:

- sirp-hp65-stc-to7-gam.feat/reg/example_func.nii

- sirp-hp65-stc-to7-gam.feat/reg/freesurfer/anat2exf.register.dat

- sirp-hp65-stc-to7-gam.feat/stats/(all statistics files of interest)

- sirp-hp65-stc-to7-gam.feat/design.gif (this image relates statistics files to experimental conditions)

FreeSurfer analysis directory, and required data

For instance, the FreeSurfer morphology analysis directory in our example dataset from Doug Greve at MGH is called fbph2-000670986943. Under this directory, QueryAtlas needs the following datasets:

- fbph2-000670986943/mri/brain.mgz

- fbph2-000670986943/mri/aparc+aseg.mgz

- fbph2-000670986943/surf/lh.pial

- fbph2-000670986943/surf/rh.pial

- fbph2-000670986943/label/lh.aparc.annot

- fbph2-000670986943/label/rh.aparc.annot
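Given a catalog that lists every file in both directory trees, QueryAtlas would need to pick out just the files named above. The sketch below filters a list of uris by path suffix and keeps everything under a .feat/stats/ directory. The function names are hypothetical, and matching on suffixes alone is an assumption about how catalog entries would be laid out.

```cpp
#include <string>
#include <vector>

inline bool EndsWith(const std::string& text, const std::string& suffix) {
  return text.size() >= suffix.size() &&
         text.compare(text.size() - suffix.size(), suffix.size(), suffix) == 0;
}

// Keeps only the uris QueryAtlas needs, based on the file lists above.
inline std::vector<std::string> FilterForQueryAtlas(
    const std::vector<std::string>& uris) {
  static const char* const kNeededSuffixes[] = {
    "/reg/example_func.nii",
    "/reg/freesurfer/anat2exf.register.dat",
    "/design.gif",
    "/mri/brain.mgz",
    "/mri/aparc+aseg.mgz",
    "/surf/lh.pial",
    "/surf/rh.pial",
    "/label/lh.aparc.annot",
    "/label/rh.aparc.annot",
  };

  std::vector<std::string> kept;
  for (const std::string& uri : uris) {
    // All statistics files of interest live under <name>.feat/stats/.
    bool needed = uri.find(".feat/stats/") != std::string::npos;
    for (const char* suffix : kNeededSuffixes) {
      if (needed) {
        break;
      }
      needed = EndsWith(uri, suffix);
    }
    if (needed) {
      kept.push_back(uri);
    }
  }
  return kept;
}
```

Filtering on the client side like this would let a catalog stay generic while QueryAtlas downloads only the handful of files it uses.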

What do we want HID webservices to provide?

- Question: are FIPS and FreeSurfer analyses (including QueryAtlas required files listed above) for subjects available on the HID yet? --Burak says not yet.

- Given that, can we manually upload an example .xcat and the datasets it points to onto the SRB, and download each dataset from the HID in Slicer, using some helper application (like curl)?

- (Eventually.) The BIRN HID webservices shouldn't really need to know the subset of data that QueryAtlas needs... maybe the web interface can take a BIRN ID, collect all uris (http://....) in the FIPS and FreeSurfer directories, and package these into an Xcede catalog.

- (Eventually.) The catalog could be requested and downloaded from the HID web GUI, with a name like .xcat or .xcat.gzip or whatever. QueryAtlas could then open this file (or unzip and open) and filter for the relevant uris for an fBIRN or Qdec QueryAtlas session.

- Then, for each uri in a catalog (or .xml MRML file), we'll use (curl?) to download; so we need all datasets to be publicly readable.

- Can we create a directory (even a temporary one) on the SRB/BWH HID for Slicer data uploads?

- We need some kind of upload service: a function call that takes a dataset and a BIRNID and uploads the data to the appropriate remote directory.